Introduction

A few months ago Microsoft released the Azure OpenAI Chat Solution Accelerator.

The accelerator helps organisations fast-track and simplify their adoption of the Azure OpenAI service by providing an out-of-the-box private chat solution with a familiar user experience.

This lets your organisation run its own ‘https://chat.yourorg.co.uk’ style application, through which your employees can harness the epic capabilities Azure OpenAI has to offer.

Deploying Azure OpenAI Chat

Deploying can be done quite simply by following the docs on the GitHub page here:

azurechat/docs/4-deploy-to-azure.md at main · microsoft/azurechat (github.com)

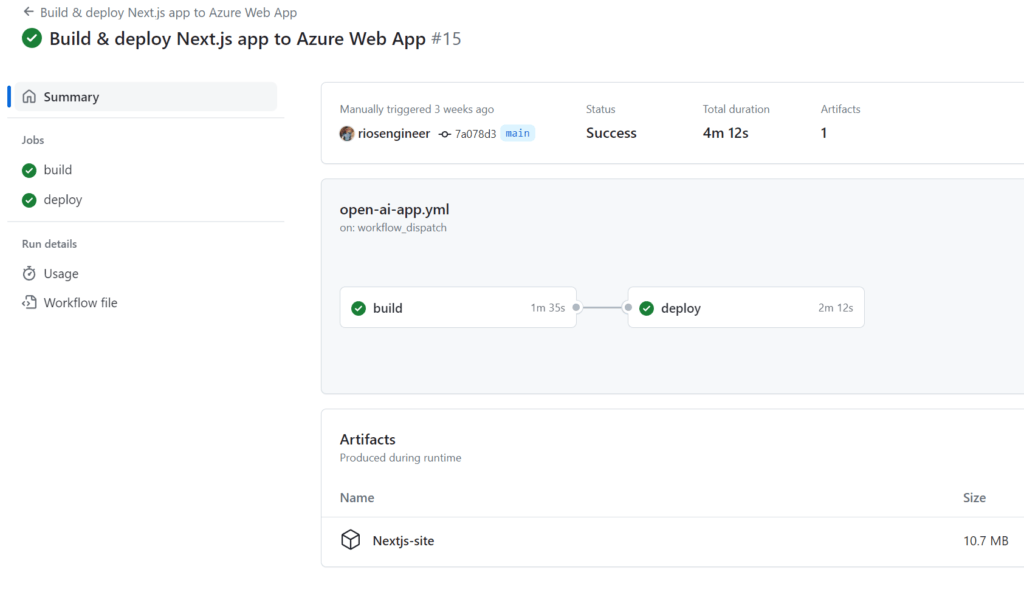

The easiest way to deploy is to fork the azurechat GitHub repository and run the GitHub Action to build & deploy the Azure OpenAI Chat Accelerator app.

However, before you go ahead and do that, you’ll need to follow the docs to set up the prerequisites and deploy the Azure resources. At a high level:

- Set up an identity provider (GitHub or Azure via App Registration)

- Deploy the Azure resources using the ARM/Bicep template

- Configure the GitHub repository secret and web app variable name

- Run the GitHub Action in your forked repo

- Confirm the Web App configuration > App Settings values for Azure tenant id, app id and secret are correct

- Test login & chat via the web URL of the Azure Web App

What’s deployed in Azure OpenAI Chat Accelerator?

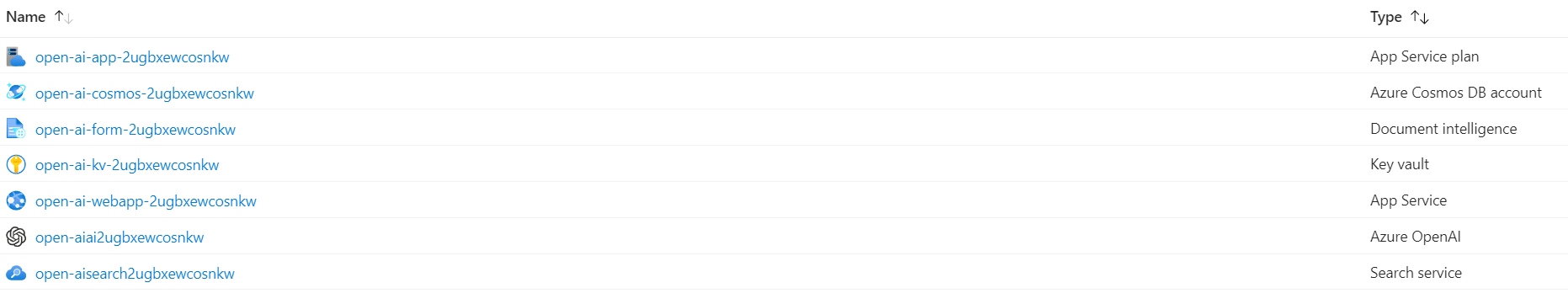

Out of the box, you’ll get the following resources deployed to the resource group:

- App Service plan

- Cosmos DB

- Document Intelligence service

- Key vault

- App Service (Azure OpenAI Chat web app)

- Azure OpenAI

- Search service

At the time of writing, I had to turn App Insights on myself, but this could easily be added to the template deployment for your needs.

All the chat history is saved into the Azure Cosmos DB.

Login to your Azure Chat Accelerator app landing page:

Models

Currently the following models are deployed to the Azure OpenAI Chat Accelerator app:

- Embedding – this is for text, code and sentence tasks

- GPT-3.5 Turbo – the GPT chat model we’re probably all familiar with now

- GPT-4 & GPT-4 32k – you can select these during deployment

What can it actually do out of the box, and what are its benefits?

Firstly, this is a private chat solution for your organisation. You’ll be able to give it a custom domain for internal use only, for example https://chat.yourorg.com.

There is also some potential confusion and overlap between what the Azure OpenAI Chat Solution offers and what Bing Chat Enterprise or Copilot offer.

The focus of the app seems to be on getting your private bot up and running quickly. If you want to integrate with Azure SQL, Blob storage, etc., you’ll need to do a bit more tweaking.

The main benefit out of the box is that you have your own private chat solution that can:

- Generate images with DALL-E, alongside all the usual benefits of having a GPT model to talk with

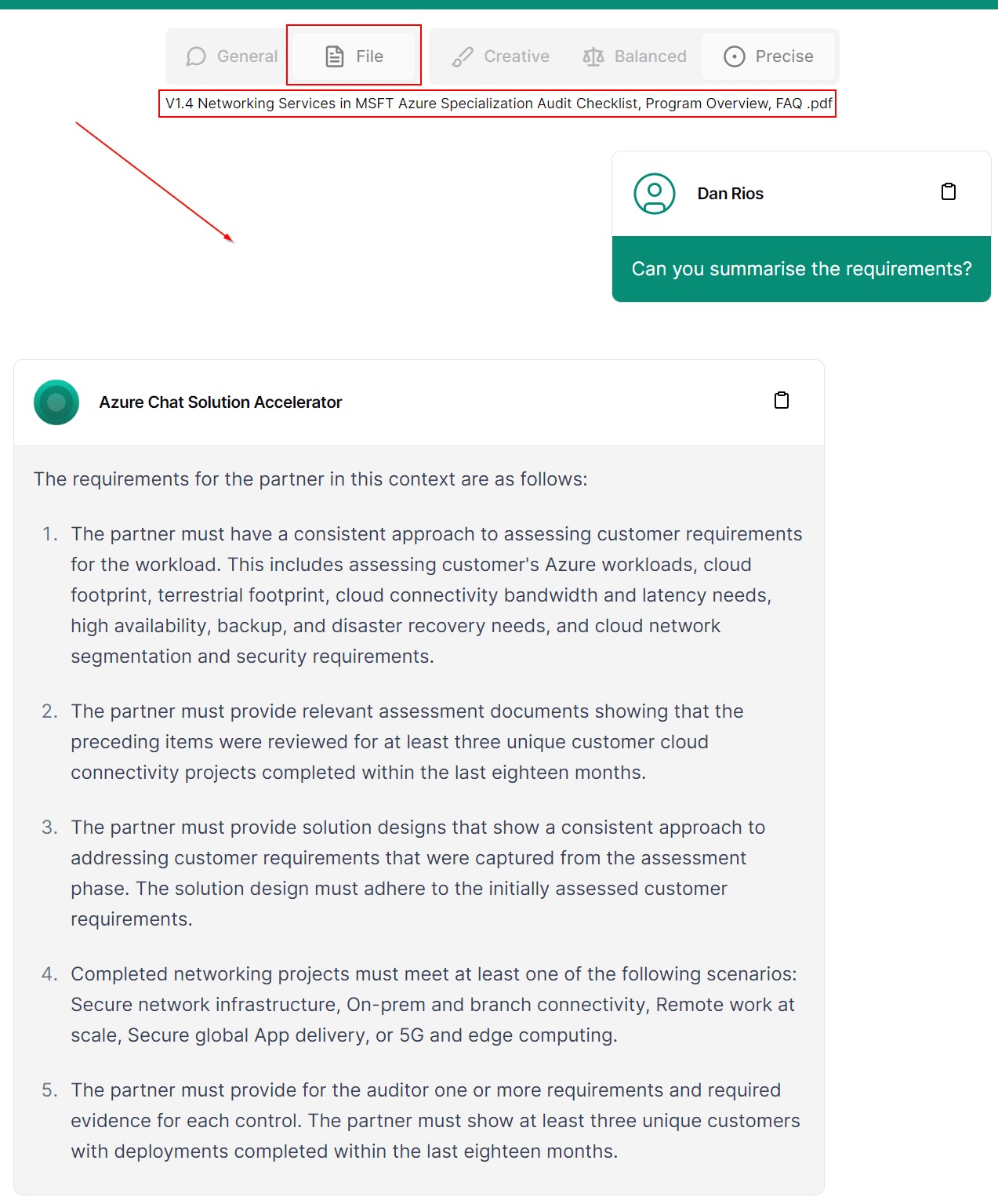

- Directly and privately query PDF, .docx, .xlsx, .pptx and HTML documents in the chat:

How much does it cost?

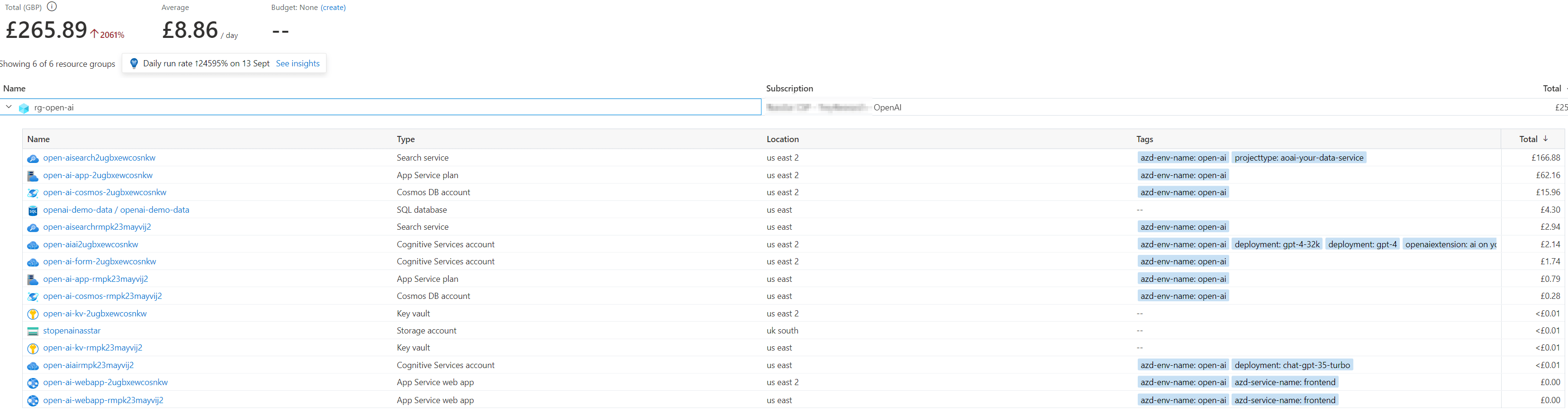

The hardest part is understanding how much Azure OpenAI Chat can cost, as it’s difficult to estimate due to tokens, completions, search service usage, etc. However, here’s my last 30 days’ usage, which comprises around 5 users playing around with the chat solution on an infrequent, ad-hoc basis. This may at least give you a reference starting point:

As you can see, the largest cost in my instance is from the search service. I have a few extra resources nested in there that I was testing with, such as Azure SQL.

Tokens?

Tokens can be thought of as ‘pieces’ of words. The question you put to the GPT model is broken down into tokens, but a token isn’t directly equal to one word. Taken from the OpenAI help KB:

- 1-2 sentences ~= 30 tokens

- 1 paragraph ~= 100 tokens

- 1,500 words ~= 2048 tokens
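To make the rule of thumb above concrete, here is a minimal Python sketch that turns a word count into a rough token estimate using the 1,500 words ~= 2,048 tokens ratio. The function name `estimate_tokens` is my own, and splitting on whitespace is an assumption for illustration; this is not a real tokenizer, so treat the result as a ballpark figure only.

```python
# Rule-of-thumb ratio quoted above: 1,500 words ~= 2,048 tokens.
WORDS_PER_REFERENCE = 1500
TOKENS_PER_REFERENCE = 2048


def estimate_tokens(text: str) -> int:
    """Rough token estimate from a whitespace word count (not a real tokenizer)."""
    word_count = len(text.split())
    # Integer ceiling division, so any non-empty prompt counts as at least one token.
    scaled = word_count * TOKENS_PER_REFERENCE
    return (scaled + WORDS_PER_REFERENCE - 1) // WORDS_PER_REFERENCE
```

For anything that affects billing, a proper tokenizer will give you the real count; this is only handy for quick back-of-the-envelope sizing.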

It would be good to have a feature, not just for this particular app but for applications like it in general, to limit tokens on a per-user basis (and do tell me if there is a way that I’ve missed!). This would give greater control over things like a daily limit of prompts, for example.
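As a rough idea of what such a limit could look like, here is a small Python sketch of a per-user daily token budget. To be clear, this is hypothetical: the class `DailyTokenBudget` and its method `try_consume` are names I’ve made up, nothing like this ships with the accelerator as far as I know, and a real version would need to persist usage somewhere (rather than in memory) and hook into the app’s chat request handling.

```python
from collections import defaultdict
from datetime import date
from typing import Optional


class DailyTokenBudget:
    """Hypothetical in-memory tracker; not part of the azurechat codebase."""

    def __init__(self, daily_limit: int) -> None:
        self.daily_limit = daily_limit
        # Maps (user_id, day) -> tokens used so far on that day.
        self._used = defaultdict(int)

    def try_consume(self, user_id: str, tokens: int, day: Optional[date] = None) -> bool:
        """Record the usage and return True, or return False if it would exceed the limit."""
        key = (user_id, day or date.today())
        if self._used[key] + tokens > self.daily_limit:
            return False
        self._used[key] += tokens
        return True
```

The idea would be to call `try_consume` with the estimated token count before sending a prompt to the model, and to reject or queue the request when it returns `False`. Because usage is keyed by day, each user’s budget resets naturally at the start of the next day.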

Useful links for costing Azure OpenAI Chat

Some good information is provided in the GitHub docs here: azurechat/docs/1-introduction.md at main · microsoft/azurechat (github.com)

There you can view an Azure Chat App sample in the pricing calculator to help you gauge some costs. Check it out:

Pricing Calculator | Microsoft Azure

As you’re charged for tokens, I’ve found the tokenizer tool below useful for getting a baseline of how many tokens a given number of words comes to:

Lastly, the Azure OpenAI service costs are laid out on the pricing page, which can be found here: Azure OpenAI Service – Pricing | Microsoft Azure
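Once you have a token count and the rates from the pricing page, the arithmetic for the model portion of the bill is simple. Here is a minimal Python sketch, assuming prices are quoted per 1,000 tokens with separate rates for prompt and completion tokens. The function name and parameters are my own, and you should plug in the current rates for your model and region rather than any number from this post. It doesn’t cover the other resources, such as the search service, which was my largest cost.

```python
def estimate_chat_cost(
    prompt_tokens: int,
    completion_tokens: int,
    prompt_price_per_1k: float,
    completion_price_per_1k: float,
) -> float:
    """Estimate the model cost of a chat workload, in the currency of the prices passed in.

    Assumes per-1,000-token pricing; take the actual rates from the pricing page.
    """
    prompt_cost = prompt_tokens / 1000 * prompt_price_per_1k
    completion_cost = completion_tokens / 1000 * completion_price_per_1k
    return prompt_cost + completion_cost
```

For example, calling `estimate_chat_cost(2000, 1000, p, c)` returns `2 * p + 1 * c` for whatever prompt rate `p` and completion rate `c` you pass in.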